Application capacity defines how many users an IT application service can support within a given response time. Another critical factor is the application’s ability to scale, which depends on the application’s architecture.

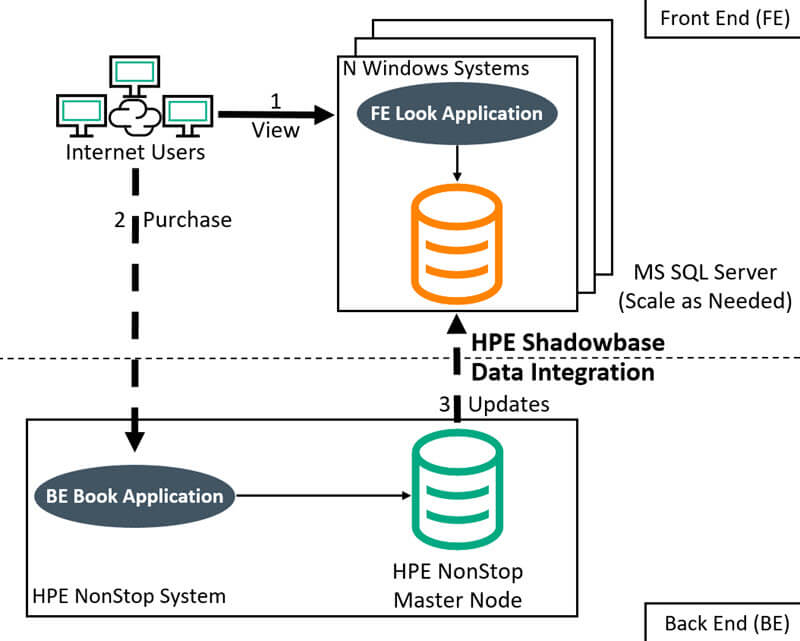

The front-end (FE) platform processes “looking” activity, such as search and view requests, which is typically light on hardware resources. The FE only needs to service these requests rapidly, which can be accomplished with lightweight, inexpensive commodity servers, each partitioned to handle a small subset of users.

If an FE server fails, the users connected to it are rerouted to a remaining FE server. Scaling is handled by adding more servers.

In contrast, the back-end (BE) system needs to be robust, highly available, highly scalable, and optimized for database transaction processing (such as the HPE NonStop server). The BE system needs to process thousands of database updates per second while maintaining database consistency.
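The FE behavior described above (partitioning users across servers, rerouting on failure, and scaling by adding servers) can be illustrated with a minimal sketch. The class and server names are hypothetical, and simple hash-modulo partitioning is an assumption chosen for brevity; it is not a description of how any particular product routes users.

```python
import hashlib


class FrontEndPool:
    """Toy router that partitions users across FE servers (hypothetical)."""

    def __init__(self, servers):
        self.servers = list(servers)

    def route(self, user_id):
        """Return the FE server that should handle this user."""
        if not self.servers:
            raise RuntimeError("no FE servers available")
        # Stable hash so the same user maps to the same server
        # for as long as the pool is unchanged.
        digest = hashlib.sha256(user_id.encode("utf-8")).digest()
        index = int.from_bytes(digest[:8], "big") % len(self.servers)
        return self.servers[index]

    def add_server(self, name):
        """Scale out by adding another FE server to the pool."""
        self.servers.append(name)

    def mark_failed(self, name):
        """Remove a failed server; its users are routed to the remaining ones."""
        self.servers.remove(name)


pool = FrontEndPool(["fe-1", "fe-2", "fe-3"])
first = pool.route("alice")
pool.mark_failed(first)          # simulate a server failure
second = pool.route("alice")     # the user lands on a surviving server
```

One design note: hash-modulo routing remaps many users whenever the pool changes. A production router might use consistent hashing or a load balancer to limit that movement.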

For example, in airline and travel reservation systems, users spend hours viewing and analyzing flight, hotel, and rental car information before booking a reservation. The number of “looks” far exceeds the number of “books” – the so-called look-to-book ratio. To optimize this activity, IT resources service “looking” activity with an FE process that is different from the “booking” BE process. This is an example of Application Capacity Expansion using “Asymmetric Capacity Expansion.”

Figure 1 — Shadowbase Data and Application Integration in an Asymmetric Capacity Expansion

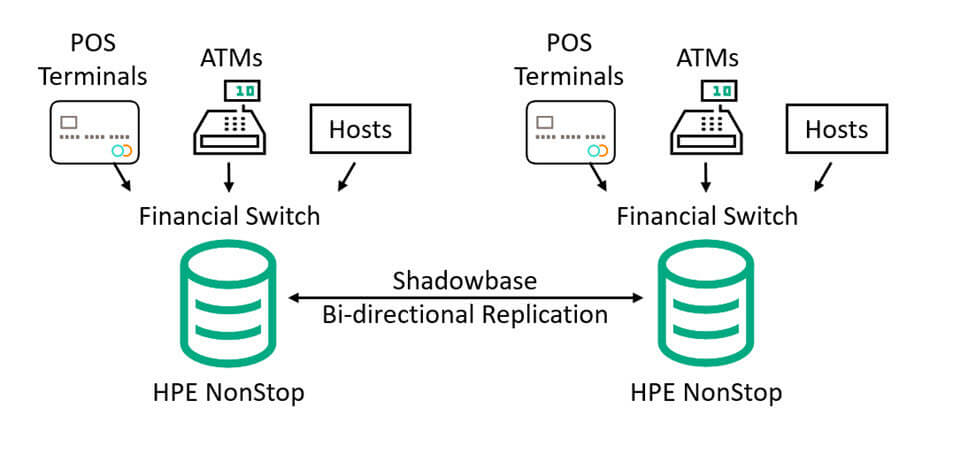
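To make the look-to-book ratio concrete, the following sketch splits a total request rate into looks and books and estimates how many FE servers the looks would need. All figures and function names are hypothetical assumptions for illustration, not measurements from any real system.

```python
import math


def split_workload(total_requests_per_sec, look_to_book_ratio):
    """Split a request rate into (looks, books) for a given look-to-book ratio."""
    # For every `look_to_book_ratio` looks there is one book.
    books = total_requests_per_sec / (look_to_book_ratio + 1)
    looks = total_requests_per_sec - books
    return looks, books


def fe_servers_needed(looks_per_sec, per_server_capacity):
    """Number of FE servers required, rounded up to a whole server."""
    return math.ceil(looks_per_sec / per_server_capacity)
```

With an assumed 10,100 requests per second and a 100:1 ratio, `split_workload(10100, 100)` yields 10,000 looks and 100 books per second. If each commodity FE server is assumed to handle 1,500 looks per second, `fe_servers_needed(10000, 1500)` returns 7, while the BE only has to absorb the 100 booking transactions.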

Another example is a “Symmetric Capacity Expansion.” This architecture is similar to an Asymmetric Capacity Expansion. The main difference is that capacity is increased by scaling out to similar servers. Usually, the data is homogeneous (like-to-like) in nature.

Figure 2 illustrates a Symmetric Capacity Expansion. A major financial switch offering POS, ATM, and host processing can increase capacity by adding more HPE NonStop servers to its architecture and using HPE Shadowbase software to keep the data bi-directionally synchronized in real time.

Figure 2 — Shadowbase Bi-directional Data Replication for a Symmetric Capacity Expansion
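The idea of bi-directional synchronization between like servers can be sketched with two in-memory nodes that forward each local write to their peer. This is a toy model under stated assumptions: the `Node` class is hypothetical, replication is a synchronous in-process call, and conflicting updates to the same key (data collisions) are not handled. It does not represent the Shadowbase API or its internals.

```python
class Node:
    """Toy database node that replicates local writes to its peers (hypothetical)."""

    def __init__(self, name):
        self.name = name
        self.data = {}
        self.peers = []

    def connect(self, other):
        """Link two nodes so that each replicates to the other."""
        self.peers.append(other)
        other.peers.append(self)

    def write(self, key, value):
        """Apply a local application write, then forward it to every peer."""
        self.data[key] = value
        for peer in self.peers:
            peer.replicate(key, value, origin=self.name)

    def replicate(self, key, value, origin):
        """Apply a change received from a peer without forwarding it again."""
        if origin == self.name:
            return  # ignore our own change if it is ever echoed back
        # Replicated changes are applied but not re-sent, which prevents
        # the same update from ping-ponging between the nodes.
        self.data[key] = value


a = Node("A")
b = Node("B")
a.connect(b)
a.write("acct-1", 100)   # written on A, appears on B
b.write("acct-2", 50)    # written on B, appears on A
```

After both writes, each node holds the same two records, so either node can serve any user.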

By dividing the application’s processes into an FE and a BE, IT teams gain flexibility in scaling, since they can choose the type and number of platforms and databases that host each part.

Application capacity expansion (ACE) typically lowers the Total Cost of Ownership (TCO) for a given solution versus the traditional method of maintaining a few large servers servicing monolithic applications.

In a distributed architecture, scaling is easier and application availability is also improved. Unfortunately, many legacy applications remain on several large servers and face scaling problems. Migrating these monolithic applications to a distributed architecture is known as an application capacity expansion (ACE).

Exchanging data between the various parts of the application requires a physical network connection and a logical connection. In the travel reservation system mentioned above, once the end user finishes the “looking” phase and books a reservation, the FE application must send the “booking” request to the BE and return the response to the user. HPE Shadowbase data and application integration software transforms the booking updates into a format that is then sent to the various FE servers and updates them with sub-second latency, so that booked rooms are automatically updated in the reservation catalogue.
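The booking flow described above can be sketched as follows: an FE forwards a booking to the BE, the BE updates its authoritative inventory, and the change is pushed to every FE catalogue. The `BackEnd` and `FrontEnd` classes are hypothetical, and direct method calls stand in for both the network and the replication engine; this is an illustration of the data flow only, not of the Shadowbase software.

```python
class BackEnd:
    """Authoritative booking system (hypothetical stand-in for the BE)."""

    def __init__(self, inventory):
        self.inventory = dict(inventory)  # item -> units still available
        self.front_ends = []

    def subscribe(self, front_end):
        """Register an FE and give it an initial copy of the catalogue."""
        self.front_ends.append(front_end)
        front_end.catalogue = dict(self.inventory)

    def book(self, item):
        """Book one unit if available and push the change to every FE."""
        if self.inventory.get(item, 0) <= 0:
            return False
        self.inventory[item] -= 1
        event = {"item": item, "available": self.inventory[item]}
        for front_end in self.front_ends:
            front_end.apply(event)
        return True


class FrontEnd:
    """Read-mostly FE that serves looks locally and forwards books to the BE."""

    def __init__(self, backend):
        self.catalogue = {}
        self.backend = backend
        backend.subscribe(self)

    def look(self, item):
        """Serve a look from the local catalogue copy."""
        return self.catalogue.get(item, 0)

    def book(self, item):
        """Forward the booking to the BE and return its response."""
        return self.backend.book(item)

    def apply(self, event):
        """Apply a replicated update to the local catalogue."""
        self.catalogue[event["item"]] = event["available"]


be = BackEnd({"room-101": 1})
fe1 = FrontEnd(be)
fe2 = FrontEnd(be)
booked = fe1.book("room-101")      # booking goes through the BE
remaining = fe2.look("room-101")   # the other FE already sees the update
```

In this sketch the second FE reflects the booking immediately because the update is applied synchronously. In a real deployment the update would arrive after a short replication delay, so the BE remains the final arbiter of whether a booking succeeds.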

Shadowbase Business Continuity software can be used to enable continuous availability should the BE environment fail. This adds redundancy and, ultimately, reliability. Scalability is also possible, since Shadowbase software supports parallelism to keep pace with an increasing workload.